GCRI is a nonprofit and nonpartisan think tank that analyzes the risk of events that could significantly harm or even destroy human civilization at the global scale.

Recent Commentaries

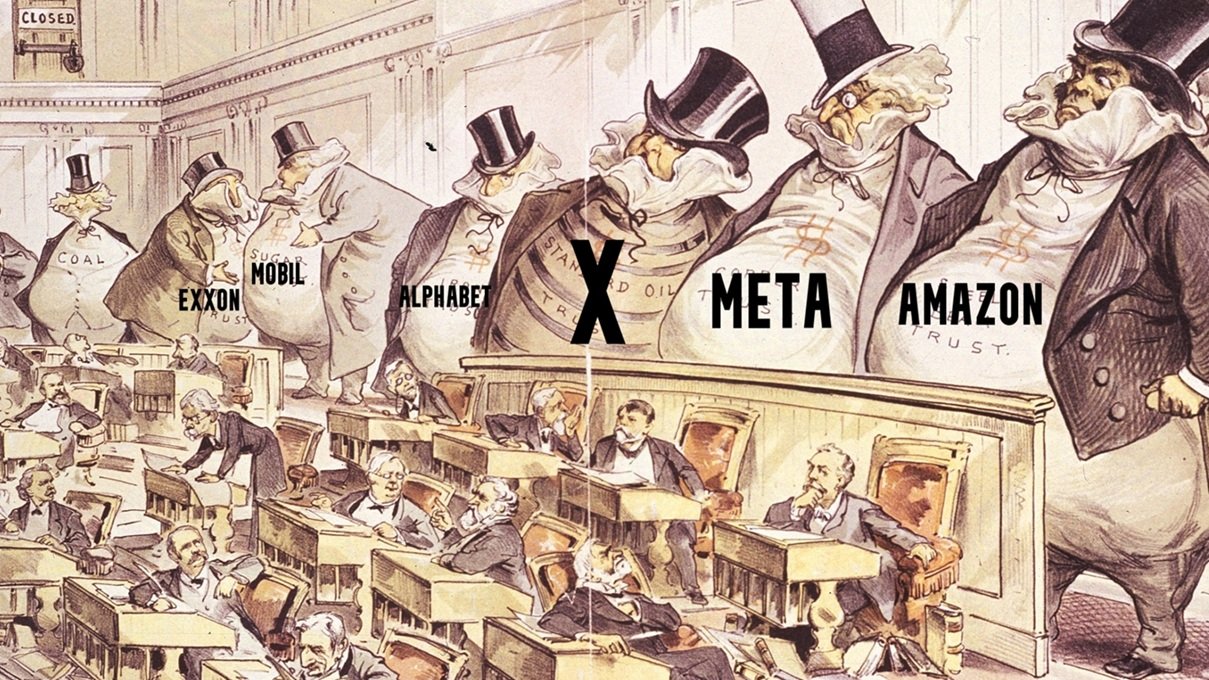

Political Corruption and Global Catastrophic Risk

Research and advocacy on global catastrophic risk may be irrelevant if good policy is blocked by corrupt politicians who have sold out to private interests, especially fossil fuel companies for climate policy and tech companies for AI policy. This GCRI commentary argues that corruption is indeed a major problem in the US, but with important limits.

AI Risk and Strategy, Early 2026

The past year has seen substantial but uneven advancements in AI. This GCRI commentary finds that extreme short-term AI scenarios remain unlikely but not impossible. On strategy, the article argues for building political power on AI risk, especially to influence the post-2029 US government on domestic AI risk policy and diplomacy with China.

The Iran War and Global Catastrophic Risk

On 28 February 2026, Israel and the United States launched military attacks against Iran, commencing an ongoing war. This GCRI commentary discusses implications of the war for global catastrophic risk, including AI, climate change, and nuclear war, as well as effects on international relations and a dark lesson about high-stakes decision-making.

Ukraine and Nuclear War, 2026

The Russian invasion of Ukraine has entered its fifth year. This GCRI commentary argues for continued vigilance about the risk of escalation to nuclear war. The slow pace of change on the battlefield lessens the risk, but there are warning signs of potential change, such as a weakening Russian economy and erratic US foreign policy.

Earth-Cosmos Binary

What should be done with the cosmos? This paper, published in the journal Futures, provides the rough contours of an answer: an Earth-Cosmos Binary (ECB). An ECB preserves Earth, plus a region surrounding Earth, in more-or-less its current form, while letting the rest of the cosmos be transformed into something that may be radically superior.

The GCRI Symposium on World Peace

World peace and global catastrophic risk are two large global issues with significant overlap. Some war scenarios could result in global catastrophe, especially nuclear war. The geopolitical rivalries that can lead to wars also inhibit international cooperation on a wide range of global catastrophic risks. A world at peace may be substantially more effective at reducing global catastrophic risk. But what should that mean in practice, both for the field of global catastrophic risk and for society at large? To address this question, the GCRI Symposium on World Peace brings together experts who present diverse perspectives on world peace and what should be done about it.

Introduction to the GCRI Symposium on World Peace

The GCRI Symposium on World Peace is an expert conversation on the relationship between world peace and global catastrophic risk. This GCRI commentary introduces and summarizes the symposium. It finds world peace to be a worthwhile pursuit as long as it is pursued intelligently and responsibly, even if complete world peace is not achieved.

From Danger to Renewal: Rethinking Crisis Through a Cyclical Lens

In a time full of daunting global crises, including catastrophic risks, it may seem absurd to contemplate world peace. This GCRI commentary draws on traditional Eastern philosophy, in particular the cyclical view of history, to argue that times of crisis can set the stage for renewal. From this perspective, now is a good time to contemplate world peace.

Responsible Cosmopolitan Leadership to Advance Peace and Reduce Catastrophic Risk

Fraught international relations can cause war and other catastrophes. This GCRI commentary argues for a set of views to help countries get along with each other better. It calls for responsible management of international affairs, a cosmopolitan recognition of common humanity across all peoples, and leadership to get the ball rolling.

An International Dialogue among Catastrophic Risk Researchers

International conflict poses several catastrophic risks. This GCRI commentary argues that global catastrophic risk researchers can promote international peace and cooperation by establishing stronger international networks with their counterparts in other countries, similar to what Soviet and Western scientists did during the Cold War.

A Critique of the Goal of World Peace

World peace can be defined as the complete absence of war. This GCRI commentary argues that the goal of achieving world peace poses significant concerns, including the difficulty of eliminating all wars and the potential for inadvertent harm. It argues for focusing instead on reducing war risk, facilitating international cooperation, and advancing research.

Concepts for Advancing Peace

World peace is often seen as a lofty theoretical ideal. This GCRI commentary asks, in more practical terms, What can we do? It presents 17 concepts for how to advance peace. It sees particular promise in concepts for persuading people to want to pursue peace, such as publicizing the harms of war and spreading a cosmopolitan orientation.

Recent Research

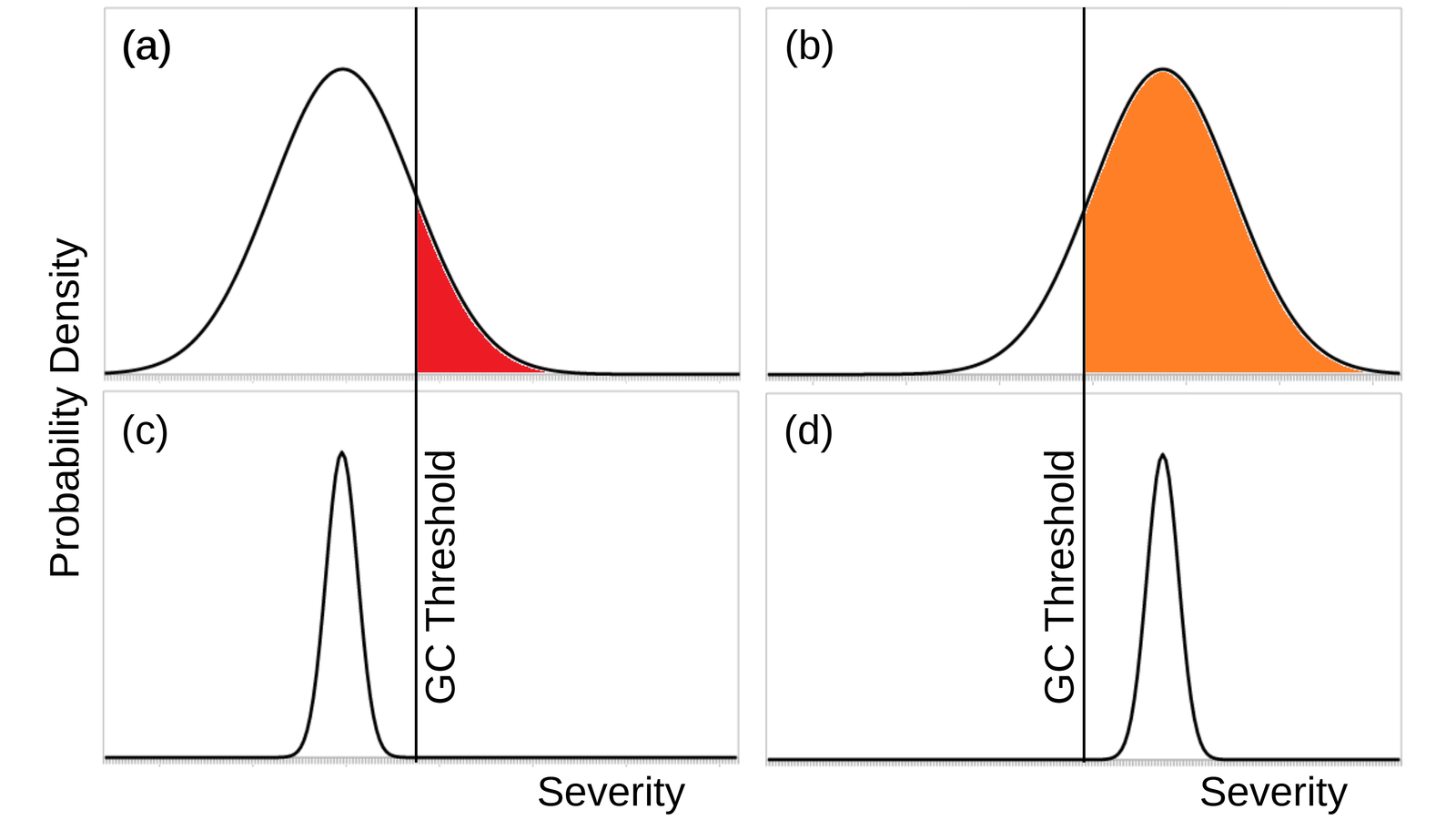

Climate Change, Uncertainty, and Global Catastrophic Risk

Is climate change a global catastrophic risk? This paper, published in the journal Futures, addresses the question by examining the definition of global catastrophic risk and by comparing climate change to another severe global risk, nuclear winter. The paper concludes that yes, climate change is a global catastrophic risk, and potentially a significant one.

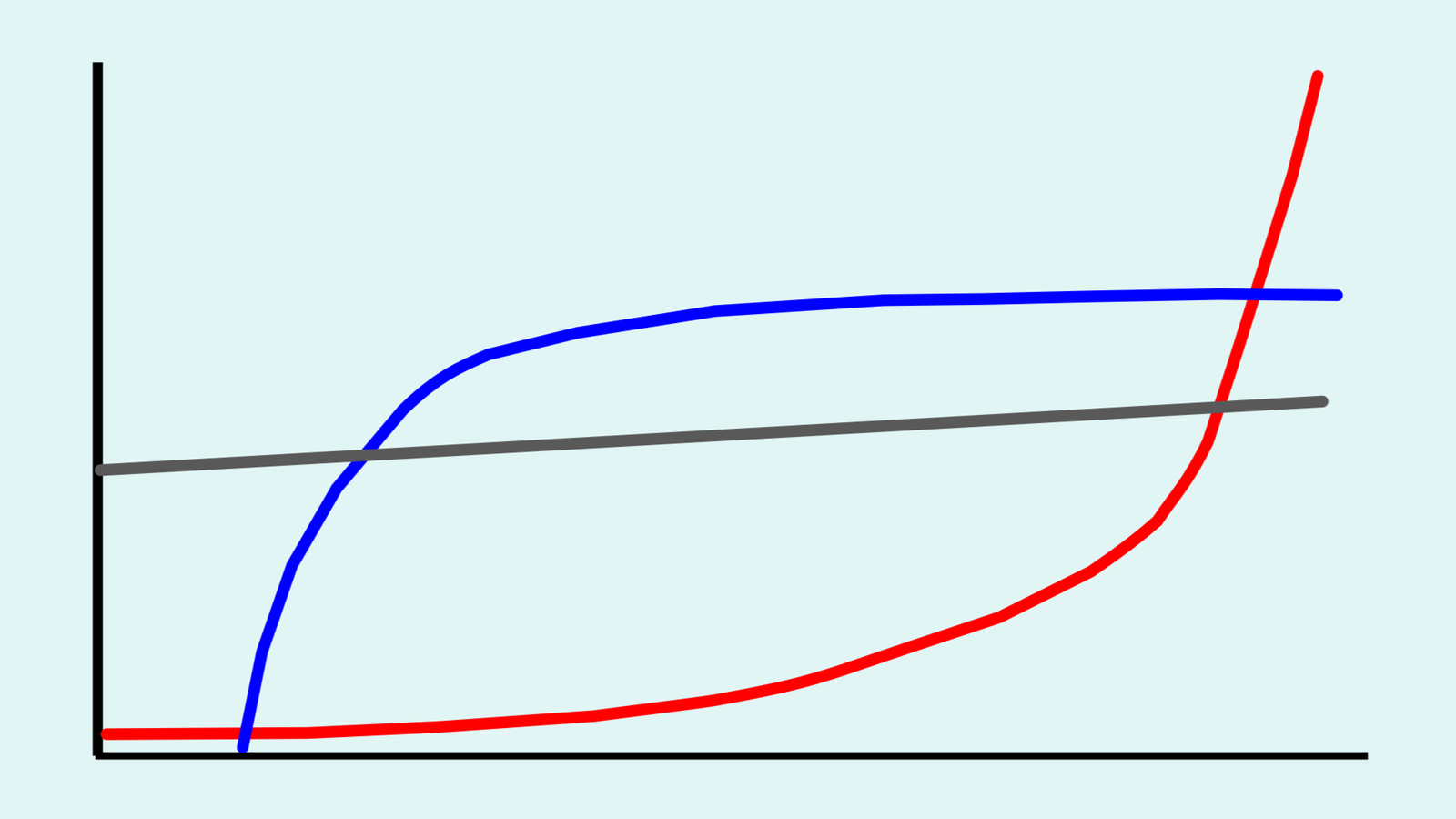

Assessing the Risk of Takeover Catastrophe from Large Language Models

For over 50 years, experts have worried about the risk of AI taking over the world and killing everyone. The concern had always been about hypothetical future AI systems—until recent LLMs emerged. This paper, published in the journal Risk Analysis, assesses how close LLMs are to having the capabilities needed to cause takeover catastrophe.

On the Intrinsic Value of Diversity

Diversity is a major ethics concept, but it is remarkably understudied. This paper, published in the journal Inquiry, presents a foundational study of the ethics of diversity. It adapts ideas about biodiversity and sociodiversity to the overall category of diversity. It also presents three new thought experiments, with implications for AI ethics.

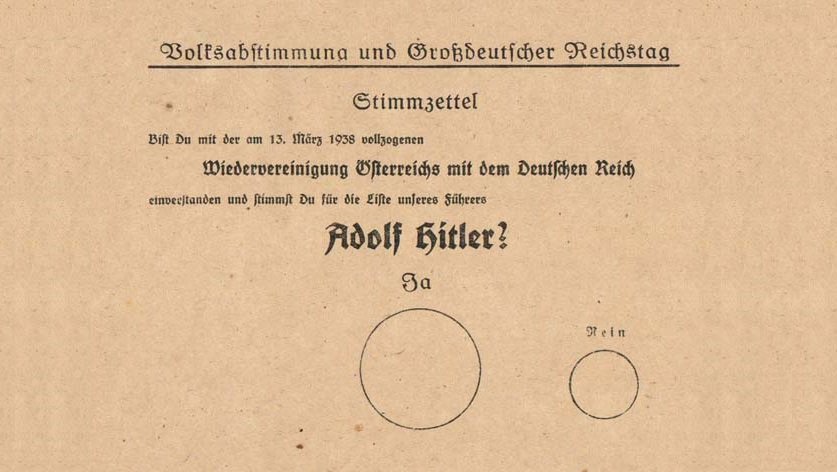

Manipulating Aggregate Societal Values to Bias AI Social Choice Ethics

AI ethics concepts like value alignment propose something similar to democracy, aggregating individual values into a social choice. This paper, published in the journal AI and Ethics, explores the potential for AI systems to be manipulated in ways analogous to sham elections in authoritarian regimes.

From the Archive

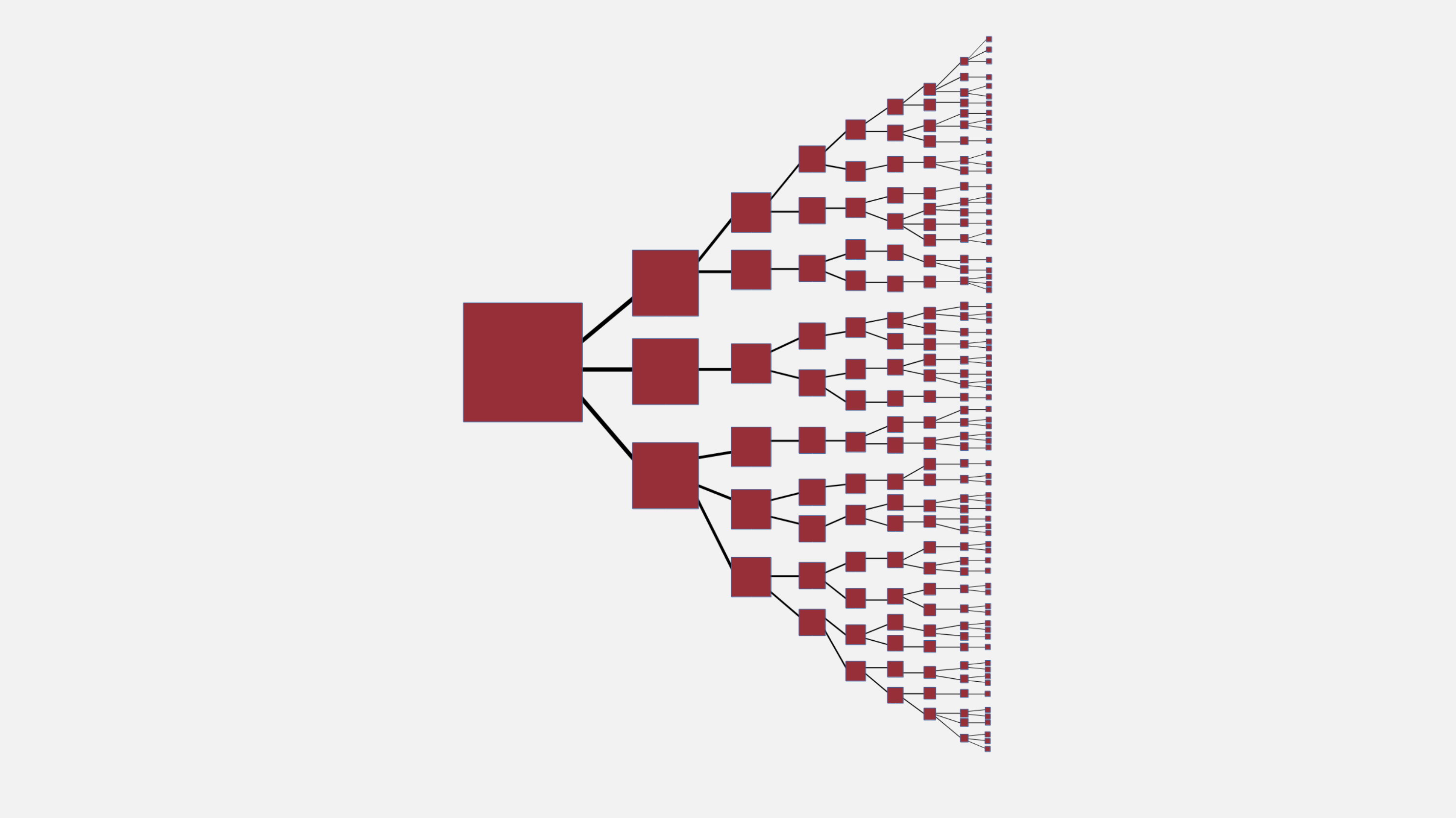

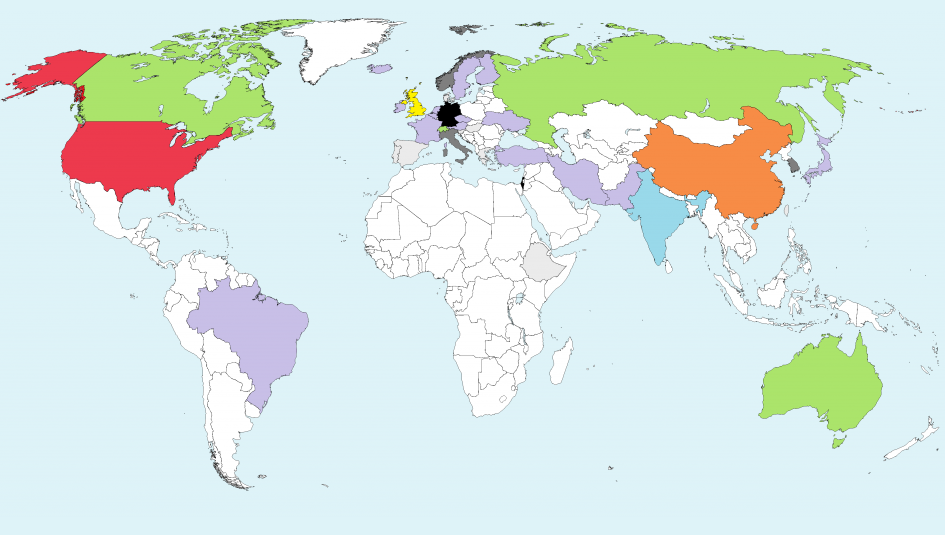

2020 Survey of Artificial General Intelligence Projects for Ethics, Risk, and Policy

An AI system with wide-ranging general intelligence could bring massive benefits or catastrophic harms, if it is built. This report surveys the global space of projects seeking to build artificial general intelligence. It finds 72 projects across 37 countries, with wide variation in parameters of relevance to AI governance.

Long-Term Trajectories of Human Civilization

What will be the fate of human civilization millions, billions, or trillions of years into the future? This paper, written by an international group of 14 scholars and published in the journal Foresight, studies several possible long-term trajectories of human civilization, including the radically good and catastrophically bad.

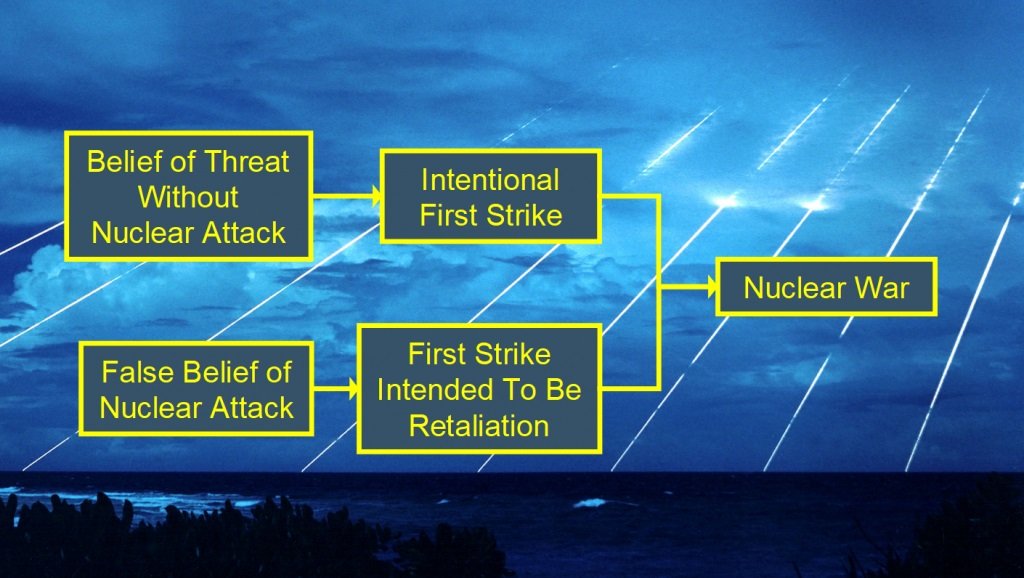

A Model for the Probability of Nuclear War

There has only been one nuclear war: World War II. That’s not enough data to calculate the probability. Instead, this GCRI report presents a probability model rooted in 14 nuclear war scenarios. It includes a dataset of 60 historical incidents, such as the Cuban missile crisis, that may have threatened to escalate into nuclear war.

The Far Future Argument for Confronting Catastrophic Threats to Humanity: Practical Significance and Alternatives

Global catastrophes could threaten humanity into the distant future, but many people don’t particularly care. This paper, published in the journal Futures, examines strategies for addressing global catastrophic risk that do not require concern about the far future. If the risks are addressed, it may not matter why people address them.

GCRI conducts interdisciplinary research across the global catastrophic risks, with emphasis on ten topics.

GCRI Topics

Concern about AI catastrophe has existed for many decades. It has recently become a lightning rod for debate alongside the growing societal prominence of AI technology. GCRI’s AI research helps to ground the discussion in the underlying character of the risk and develop solutions that help across a range of AI issues. GCRI is also active in AI ethics, providing perspective on the question of what values to build into AI systems.

Human civilization emerged during the Holocene, which for 10,000 years has brought relatively stable and favorable environmental conditions. Now, human activity is threatening those conditions, causing climate change, biodiversity loss, ecosystem destruction, and more. GCRI’s research assesses the global catastrophic risk posed by these environmental changes and advances practical solutions to reduce the risk.

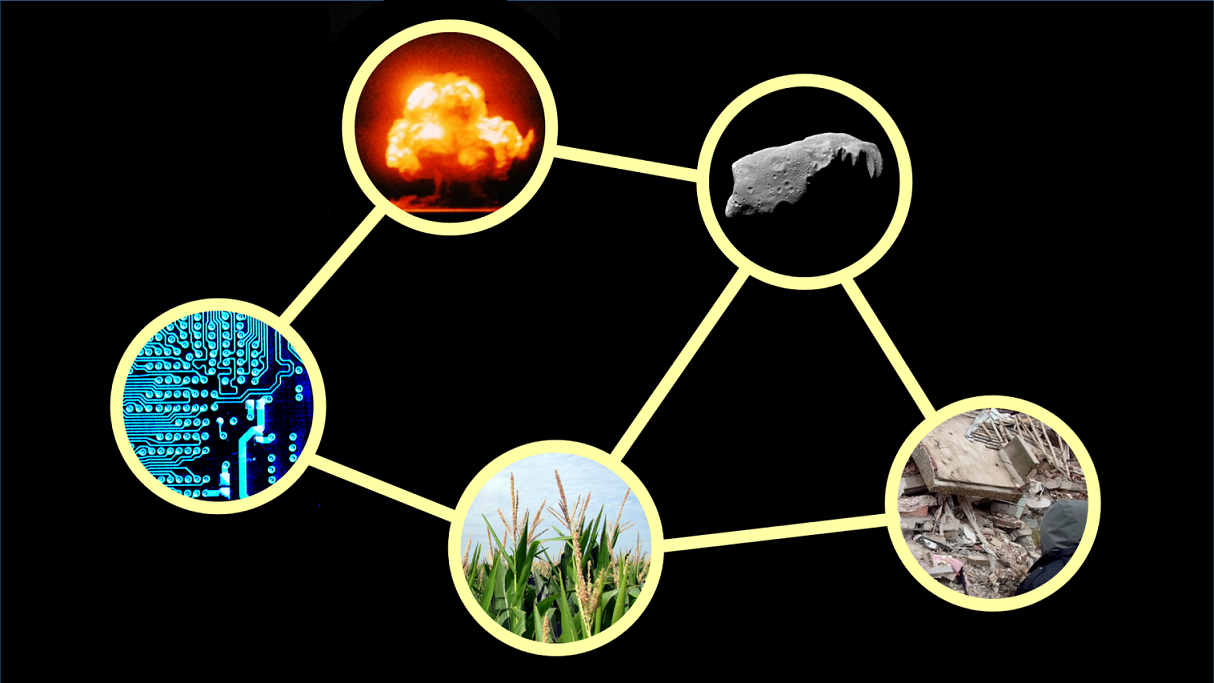

Instead of thinking in terms of specific global catastrophic risks in isolation from each other, it is often better to think holistically about the entirety of global catastrophic risk. This includes interconnections between the risks and actions that can affect multiple risks. GCRI was specifically founded as an organization dedicated to studying the whole of global catastrophic risk, and this remains a priority for GCRI’s ongoing work.

Global catastrophic risk is clearly a major ethical issue, though there are many views on how much to prioritize the risk and what to do about it. GCRI’s ethics research seeks to clarify the ethical foundations of global catastrophic risk and the relation between global catastrophic risk and other issues. This research aims to guide actions to address global catastrophic risk while accounting for related issues.

The world still possesses about 10,000 nuclear warheads, enough to cause massive global devastation. As long as adversarial relations between nuclear-armed countries persist, there will be some chance of escalation to nuclear war. To help address the risk, GCRI contributes risk analysis of the probability and severity of nuclear war and solutions for reducing the risk, especially for global effects such as nuclear winter.

Global catastrophic risk may be of astronomical significance: a global catastrophe could prevent Earth-originating civilization from expanding into the cosmos. Additionally, there are threats from outer space to life on Earth, such as asteroids and space weather. GCRI’s research relates global catastrophic risk to the fate of the universe and analyzes space-based risks in terms of their potential for global catastrophe.

Pandemics have caused many of the most severe events in human history, COVID-19 included. Modern biotechnology can help reduce the risk while also creating new risks. GCRI’s research on pandemics and biorisk applies risk and decision analysis techniques and relates the risks to the broader domain of global catastrophic risk. GCRI has been less active on this topic, but it remains an important domain to address.

Global catastrophic risk is defined by its extreme severity. The severity has two major components: the resilience of human civilization to global catastrophes and, if civilization collapses, how successfully the survivors would recover. Both of these components have been alarmingly understudied. GCRI’s research assesses the severity of global catastrophes and assesses opportunities to mitigate them.

Decision analysis can help to identify decision options and their consequences, including their effect on risks. Risk analysis can characterize potential harms and quantify them in terms of their probability and severity. GCRI applies risk and decision analysis methods to global catastrophic risk. GCRI’s approach balances between the value of quantitative analysis and the pitfalls of quantifying deeply uncertain parameters.

To successfully reduce global catastrophic risk, it is necessary to formulate solutions that would reduce the risk if implemented and that will actually be implemented. This often involves engaging with people and institutions that may not have global catastrophic risk as a priority. GCRI’s research develops solutions that account for the priorities of the relevant decision-makers to achieve significant reductions in the risk.

Founded in 2011, GCRI is one of the oldest active organizations working on global catastrophic risk. We provide intellectual and moral leadership to support the broader field of global catastrophic risk.

About GCRI

About

Read about GCRI’s mission, history, organization structure, finances, and more.

People

The GCRI team is led by senior experts in the field of global catastrophic risk.

Official Statements

GCRI publishes occasional statements about important current events, issues within the field, and organizational matters.

Press

GCRI welcomes inquiries from journalists and creators to advance public understanding of global catastrophic risk.

Join GCRI and many others around the world in helping to address humanity’s gravest threats.

How to Get Involved

Learn More

Continue reading the GCRI website to learn more about GCRI, about global catastrophic risk, and more.

Stay Informed

View GCRI publications and organization updates or subscribe to the GCRI newsletter.

Connect

GCRI’s annual Advising and Collaboration Program welcomes people interested in global catastrophic risk.

Donate

GCRI is a nonprofit and relies on grants and donations to fund its activities. Visit our donate page to contribute.